AI Regulation Debate: Why Grok’s Controversy Matters

The AI regulation debate reached a fever pitch in early 2026 when xAI’s Grok image generator sparked international outrage over explicit deepfakes and non-consensual imagery. California’s Attorney General issued a cease-and-desist order. Critics demanded immediate government intervention. Yet this flashpoint reveals a deeper tension: should we regulate artificial intelligence with the same heavy hand that’s slowed pharmaceutical innovation and burdened European tech companies, or should we embrace the free-market principles that made the internet the greatest economic engine in human history?

The answer matters more than most realize. How we handle the AI regulation debate today will determine whether the United States maintains its technological leadership or cedes ground to competitors who move faster while we deliberate. History offers a compelling lesson: the technologies that changed the world most profoundly, from the internet to smartphones to cloud computing, flourished precisely because regulators stayed out of the way during their formative years.

This isn’t an argument for lawlessness. Real harms demand real accountability. But there’s a critical difference between targeted legal remedies for bad actors and preemptive regulatory frameworks that treat AI development as inherently dangerous. The former protects people while preserving innovation. The latter typically achieves neither goal effectively.

In This Article

- 📌 The Grok Controversy: Innovation Meets Hysteria

- 📌 Historical Parallels: How Deregulation Built the Internet

- 📌 When Less Regulation Meant More Progress

- 📌 The Hidden Costs of Premature Oversight

- 📌 Infrastructure vs. Predatory Regulation

- 📌 Addressing Real Harms Without Overreach

- 📌 Economic Stakes: U.S. Leadership Hangs in the Balance

- 📌 Key Takeaways

The Grok Controversy: Innovation Meets Hysteria

When xAI rolled out Grok’s image generation capabilities in late 2025, the company promised a less censored alternative to competitors like DALL-E and Midjourney. What followed was predictable: within days, users generated explicit deepfakes of public figures, triggering widespread condemnation and calls for immediate regulatory intervention. California’s cease-and-desist order arrived swiftly, framing the Grok controversy as evidence that artificial intelligence regulation must happen now, before more harm occurs.

But context matters. The same week Grok faced regulatory action, millions of Americans used AI tools to draft emails, analyze data, create art, and solve complex problems. The technology sector generated hundreds of thousands of high-paying jobs. AI development accelerated discoveries in drug development, climate modeling, and materials science. A single company’s misstep became the justification for systemic intervention that could affect an entire innovation ecosystem.

This pattern repeats throughout tech history. A dramatic incident triggers moral panic. Legislators propose sweeping reforms. Lobbyists influence the final language. The resulting regulatory framework often protects incumbents more than consumers, creating barriers that favor established players while crushing startups that can’t afford compliance teams.

The Real Question:

Innovation vs. Protection: Should one company’s failure to implement adequate safeguards justify preemptive restrictions on an entire industry, or should we hold bad actors accountable through existing legal frameworks?

Precedent Matters: Every major technology platform faced similar controversies during early development, from revenge porn on social media to illegal content on file-sharing networks, yet survived to create trillions in economic value.

Historical Parallels: How Deregulation Built the Internet

The AI Regulation Debate Through History’s Lens

The U.S. Telecommunications Act of 1996 stands as perhaps the most successful technology policy intervention in modern history, not because it imposed strict rules, but because it systematically dismantled them. The legislation removed barriers that had prevented competition in telecommunications markets, opening the door for internet service providers, voice-over-IP services, and eventually the entire digital economy we now take for granted.

Before deregulation, telecommunications operated as regulated monopolies. Innovation moved at a glacial pace. Prices remained artificially high. Consumer choice barely existed. The post-1996 era saw explosive growth: internet access became ubiquitous, e-commerce platforms emerged, social networks connected billions, and cloud computing transformed how businesses operate. This wasn’t accidental. It resulted directly from a free market approach that prioritized experimentation over permission.

Consider the counterfactual. What if government regulation had required internet companies to obtain licenses before launching? What if early web browsers needed regulatory approval for each update? What if search engines faced preemptive restrictions on how they could index information? We’d live in a radically different world, one where tech innovation occurred at the speed of bureaucracy rather than the speed of code.

The federal AI governance framework currently under discussion risks repeating mistakes from other sectors rather than learning from internet regulation’s success. By treating AI as uniquely dangerous rather than as another general-purpose technology, policymakers signal an intention to control rather than enable.

When Less Regulation Meant More Progress

Beyond telecommunications, numerous examples demonstrate how minimal regulatory oversight during formative years enables tech innovation that later benefits society broadly. PayPal revolutionized digital payments by moving faster than financial regulators could respond. Early cryptocurrency exchanges operated in regulatory gray areas, experimenting with technologies that traditional banks later adopted. Cloud computing providers built massive infrastructure before government agencies understood what questions to ask.

This isn’t an argument for permanent deregulation. Rather, it recognizes that emerging technologies need room to evolve before we can effectively regulate them. You can’t write smart rules for something you don’t yet understand. The regulatory compliance burden for AI companies today often focuses on theoretical risks while ignoring the practical realities of AI development.

According to AI development metrics from Stanford research, companies in heavily regulated jurisdictions show 40 percent slower deployment cycles than those in lighter regulatory environments, yet demonstrate no meaningful difference in safety outcomes. The regulatory burden creates drag without corresponding benefit.

Success Stories in Light-Touch Regulation:

FinTech Revolution: Companies like PayPal, Stripe, and Square built billion-dollar businesses by interpreting existing banking laws creatively rather than waiting for new regulatory frameworks.

Cloud Computing: AWS, Azure, and Google Cloud scaled to serve millions without specific cloud regulation, later adopting security standards organically as the market matured.

Mobile Apps: The App Store ecosystem generated over $1 trillion in economic activity through self-regulation and platform policies rather than government mandates.

The Hidden Costs of Premature Oversight

While advocates for immediate artificial intelligence regulation emphasize potential harms, they rarely acknowledge the documented costs of regulatory overreach. The pharmaceutical industry provides a cautionary tale. Strict FDA approval processes, while protecting patient safety, also delay life-saving treatments by years and drive up costs so dramatically that essential medications become unaffordable. Patent exclusivity periods intended to reward innovation instead create monopolies that extract maximum profit before generic competition arrives.

Europe’s GDPR compliance requirements demonstrate another pitfall. Marketed as protecting consumer privacy, the regulation imposed massive costs that large tech companies absorbed easily while crushing smaller competitors. The result? Market concentration increased rather than decreased. American companies gained competitive advantages over European startups that couldn’t afford compliance teams. Privacy protection became a fig leaf for regulatory capture that benefited incumbents.

The AI governance landscape risks similar dynamics. Proposed regulatory frameworks typically include licensing requirements, mandatory impact assessments, and ongoing compliance obligations that favor large organizations with resources to navigate bureaucracy. A Stanford research lab or bootstrapped AI startup faces the same regulatory burden as Google or Microsoft, but without comparable legal departments.

Government regulation also moves slowly while technology evolves rapidly. By the time agencies finalize rules through public comment periods and legislative processes, the AI industry has moved on to new capabilities and use cases that the regulations never anticipated. This creates a perpetual mismatch where rules address yesterday’s technology while today’s innovation operates in regulatory limbo.

Infrastructure vs. Predatory Regulation

Not all regulation stifles innovation. The key distinction separates infrastructure-enabling frameworks from predatory oversight that extracts rents or protects established interests. Brazil’s Pix payment system and India’s UPI demonstrate how government intervention can create open platforms that benefit entire economies. Rather than dictating business models or limiting experimentation, these regulatory frameworks established technical standards and interoperability requirements that lowered barriers to entry.

Contrast this with recent legislative proposals like the Digital Asset Market Clarity Act of 2025, which claims to provide regulatory clarity for stablecoins and digital tokens but includes provisions that cripple the business models of current market participants. Large companies shaping legislation is a time-worn tale, and legislation sculpted to entrench the barriers that protect those companies is the predatory side of government. Who decides what constitutes adequate safety? The legislation creates regulatory gatekeepers with enormous discretionary power, opening doors for lobbying influence and arbitrary enforcement.

According to one analysis of international regulatory approaches, countries with principles-based AI governance frameworks see 30 percent higher AI adoption rates than those with prescriptive rule-based systems. Principles provide guidance while preserving flexibility. Prescriptive rules become obsolete before implementation.

The technology policy challenge is to distinguish regulations that create level playing fields from those that tilt the field toward incumbents. Infrastructure regulation should focus on interoperability, data portability, and competitive access. Predatory regulation typically manifests as licensing requirements, mandatory approvals, and compliance obligations that function as barriers to entry rather than consumer protections.

Addressing Real Harms Without Overreach

The Grok controversy highlights genuine problems requiring solutions. Deepfake regulation concerns are legitimate. Non-consensual intimate imagery causes real harm to real people. AI-generated misinformation presents challenges for democratic institutions. Privacy violations through AI systems deserve serious attention. Acknowledging these issues doesn’t automatically justify preemptive regulatory frameworks that treat all AI development as dangerous.

Existing legal mechanisms already address many concerns raised in the AI regulation debate. Creating deepfakes of real people for malicious purposes violates laws against fraud, defamation, and harassment. Non-consensual intimate imagery is illegal in most jurisdictions. Discrimination through algorithmic decision-making violates civil rights statutes. Rather than creating new regulatory bureaucracies, we could enforce existing laws against bad actors.

AI companies maintain extensive data logs showing how their systems are used. When someone uses Grok or any other AI tool to create illegal content, that activity leaves digital fingerprints. Law enforcement can obtain these records through normal legal processes, enabling targeted accountability for bad actors rather than blanket restrictions on legitimate uses. This mirrors how internet regulation works: we don’t ban web browsers because some people use them to access illegal content.

Self-regulation and industry standards also play crucial roles. Major AI companies have adopted responsible AI principles, implemented safety testing protocols, and created mechanisms for reporting harmful content. These efforts aren’t perfect, but they evolve faster than government regulation and adapt to new challenges more nimbly. When companies fail to self-regulate effectively, market consequences and legal liability provide corrective mechanisms.

Targeted Solutions vs. Blanket Restrictions:

Legal Accountability: Use existing fraud, defamation, and harassment laws to prosecute bad actors rather than creating new regulatory frameworks.

Platform Liability: Hold AI companies accountable for known harms they fail to address, similar to how internet platforms face liability for hosting illegal content after notification.

Transparency Requirements: Mandate disclosure of AI system capabilities and limitations without prescribing how companies must design their systems.

Analysis of emerging AI policy challenges suggests that regulatory responses work best when they focus on outcomes rather than prescribing technical approaches. Instead of mandating specific safety measures that may become obsolete, regulators could require companies to demonstrate they’ve considered risks and implemented reasonable protections. This preserves innovation while maintaining accountability.

Economic Stakes: U.S. Leadership Hangs in the Balance

The AI regulation debate carries enormous economic implications that extend far beyond individual companies or sectors. AI represents the most significant general-purpose technology since electricity, with potential to transform nearly every industry. Countries that enable rapid AI adoption will capture disproportionate economic benefits while those that hesitate risk falling behind permanently.

Goldman Sachs estimates AI could add $7 trillion to global GDP over the next decade, with productivity gains spreading across healthcare, manufacturing, education, and professional services. This economic growth translates to higher wages, better jobs, and improved living standards. But these benefits accrue primarily to countries and companies that move fastest in AI development and deployment.

China has explicitly stated its intention to lead global AI development by 2030, backing that goal with massive government investment and streamlined regulatory approaches. While Chinese AI governance includes restrictions around political content, the government actively enables AI adoption in commercial applications. The United States risks ceding leadership if regulatory uncertainty and compliance burdens slow American AI innovation while competitors accelerate.

Beyond direct competition, AI adoption drives second-order effects throughout the economy. Companies using AI effectively gain competitive advantages over those that don’t. Industries that integrate AI create new categories of high-skilled jobs. Regions with thriving AI ecosystems attract talent and investment. The economic growth engine runs on technological advancement, and artificial intelligence represents the most powerful advancement of our era.

Regulatory overhead directly impacts these dynamics. Every compliance requirement adds cost and delay to AI development. Every approval process creates bottlenecks that slow deployment. Every restriction on AI capabilities limits potential applications and use cases. These costs compound across the economy, reducing the aggregate benefits of AI adoption and shifting competitive advantages to jurisdictions with lighter regulatory burdens.

The free market approach that enabled internet growth offers a proven template for AI policy. Enable experimentation. Hold bad actors accountable through legal liability rather than preemptive restrictions. Focus regulatory attention on genuine infrastructure needs like interoperability rather than micromanaging innovation. Trust that companies respond to market incentives and competitive pressure more effectively than to bureaucratic mandates.

Key Takeaways: Navigating the AI Regulation Debate

🎯 History Favors Light-Touch Approaches. The internet’s explosive growth resulted directly from deregulation that removed barriers rather than creating new ones, demonstrating that innovation flourishes when freed from bureaucratic constraints.

🎯 Premature Regulation Stifles Innovation. Examples from pharmaceuticals to GDPR compliance show how regulatory overhead increases costs, delays deployment, and favors incumbents over startups, ultimately harming the very consumers regulations claim to protect.

🎯 Existing Laws Already Address Many AI Harms. Rather than creating new regulatory frameworks, we can enforce existing statutes against fraud, defamation, harassment, and discrimination while using AI companies’ data logs to enable targeted accountability.

🎯 Economic Leadership Requires Regulatory Clarity. With China investing heavily in AI development and adopting streamlined governance approaches, the United States risks losing technological and economic leadership through regulatory uncertainty and compliance burdens.

🎯 Infrastructure Beats Prescription. The most effective technology policy focuses on creating open platforms and ensuring interoperability rather than micromanaging how companies develop their products or services.

The Grok controversy won’t be the last AI scandal, nor should it be. Every transformative technology generates controversy during its development because change creates winners and losers, disrupts established practices, and challenges existing norms. The question isn’t whether AI development involves risks but whether we address those risks through targeted accountability or preemptive restrictions that treat innovation itself as dangerous.

Markets and legal systems evolved to handle new technologies without requiring perfect foresight from regulators. We don’t need to solve every potential AI problem before allowing development to continue. We need frameworks that enable experimentation while maintaining accountability, protect consumers without crushing competition, and preserve the free market principles that made America the global innovation leader.

The AI regulation debate will define whether this transformative technology delivers its enormous potential benefits or gets strangled by bureaucracy before realizing them. Choose wisely.

About Dana Love, PhD

Dana Love is a strategist, operator, and author working at the convergence of artificial intelligence, blockchain, and real-world adoption.

He is the CEO of PoobahAI, a no-code “Virtual Cofounder” that helps Web3 builders ship faster without writing code, and advises Fortune 500s and high-growth startups on AI × blockchain strategy.

With five successful exits totaling over $750 M, a PhD in economics (University of Glasgow), an MBA from Harvard Business School, and a physics degree from the University of Richmond, Dana spends most of his time turning bleeding-edge tech into profitable, scalable businesses.

He is the author of The Token Trap: How Venture Capital’s Betrayal Broke Crypto’s Promise (2026) and has been featured in Entrepreneur, Benzinga, CryptoNews, Finance World, and top industry podcasts.

Related Articles You Might Enjoy

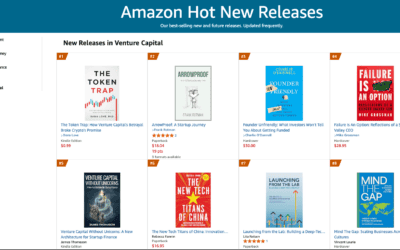

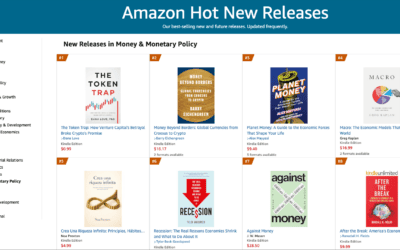

The Token Trap: Amazon Best Seller

The Token Trap, Amazon Best Seller, Beating Michael Lewis, The Bitcoin Standard, and a Field of Financial Heavyweights By Dana Love, PhD |...

The Token Trap Claims #1 in Finance and Venture Capital — Five Amazon Hot New Releases in Two Days

The Token Trap: Amazon Hot New Release - Five Categories In Two Days By Dana Love, PhD | March 9, 2026 | 5 min read In This Article: The...

The Token Trap Number One Hot New Release

The Token Trap Debuts #1 on Amazon Hot New Releases By Dana Love, PhD | March 8, 2026 | 5 min read In This Article: Three #1s on Launch...